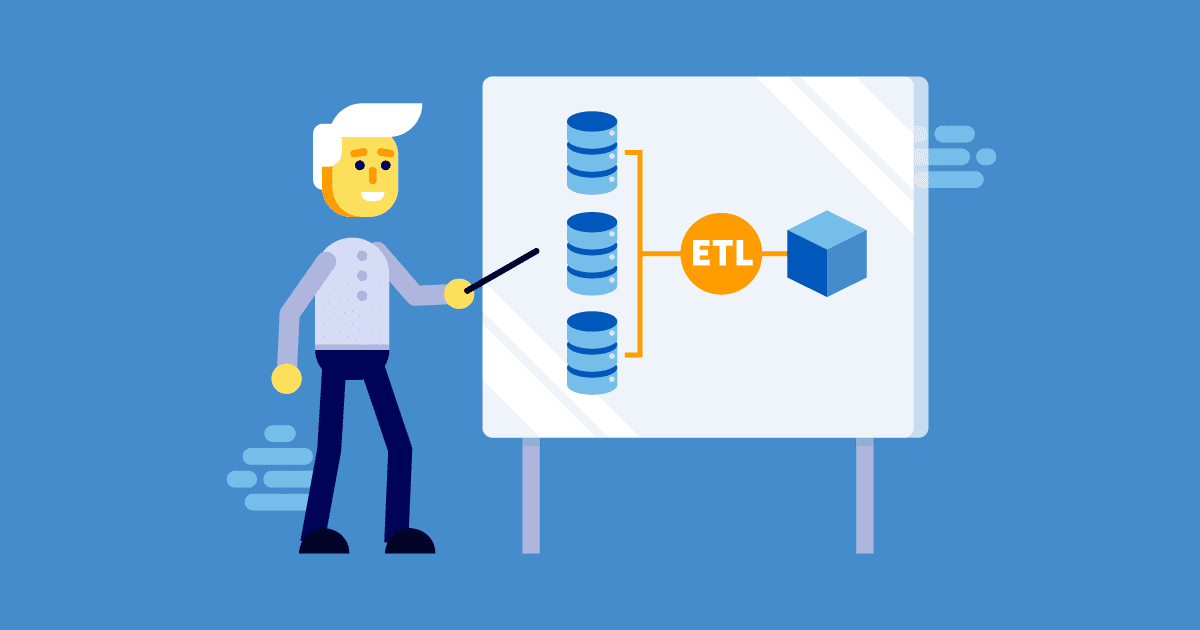

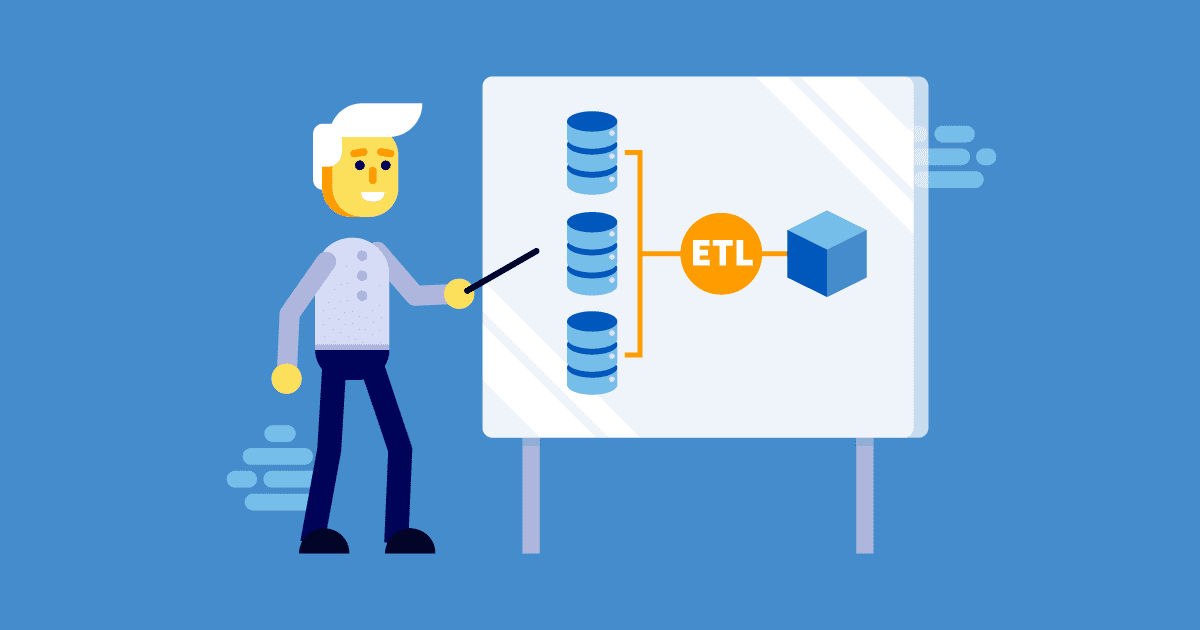

The letters ETL stand for Extract, Transform, Load, which describes an integration that combines these three database functions into one process.

ETL extracts data from various sources, converts the raw data to fit a common schema, and then loads it into a data warehouse, where it can be queried for business intelligence. In this article, "data warehouse" simply means a collection of databases where the information is available for analysis. ETL involves a lot of complex data and many processing steps, so it needs thorough testing. Let’s find out more about it.

Steps in the ETL Process

If you are wondering how the ETL process fits into a real-life scenario, let’s take a look at an example. Meet Joe. He works for a pharmaceutical company and, over the years, has worked in several of its departments, including sales, HR and business development. Each department is large enough to keep its own database of employee records, but Joe’s company wants to analyze his historical data to find out how he has performed over the past few years. Since his records are spread across the different department databases, they have to be extracted and combined before they can be analyzed together, which is the job of the ETL process and its tools. Below, we describe in detail how this is done.

Extract:

The first step in the ETL process is extracting the data from the various data sources. A source can be a third-party database such as Oracle Database or Microsoft SQL Server, or even a flat file such as a CSV file.

Transform:

The extracted data is then transformed to fit a common schema. This step includes cleansing, which mostly means removing or correcting inaccurate or incomplete records.

Load:

The transformed data from the previous step is loaded into an Online Analytical Processing (OLAP) data warehouse, where it is stored and can be used for further analysis and business intelligence.
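The three steps above can be sketched in a few lines of Python. This is only a minimal illustration, not a production pipeline: the CSV content, the `sales_fact` table and the function names are all made up for the example, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical source extract: one department's records as CSV text.
SALES_CSV = """employee_id,name,units_sold
1,Joe,120
2,Ann,
3,Raj,95
"""

def extract(csv_text):
    """Extract: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(csv_text)))

def transform(rows):
    """Transform: drop incomplete records and cast fields to the target types."""
    clean = []
    for row in rows:
        if not row["units_sold"]:
            continue  # incomplete record, removed during cleansing
        clean.append((int(row["employee_id"]), row["name"].strip(), int(row["units_sold"])))
    return clean

def load(conn, records):
    """Load: write the transformed records into the (assumed) target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_fact "
        "(employee_id INTEGER PRIMARY KEY, name TEXT, units_sold INTEGER)"
    )
    conn.executemany("INSERT INTO sales_fact VALUES (?, ?, ?)", records)
    conn.commit()

def run_etl(csv_text):
    conn = sqlite3.connect(":memory:")  # stand-in for the warehouse
    load(conn, transform(extract(csv_text)))
    return conn

conn = run_etl(SALES_CSV)
print(conn.execute("SELECT * FROM sales_fact ORDER BY employee_id").fetchall())
# -> [(1, 'Joe', 120), (3, 'Raj', 95)]
```

Ann's record is dropped because its `units_sold` field is empty. A real pipeline might repair or quarantine such a record instead of discarding it.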

What to Test in ETL?

The complex data that has been collated from the various sources must be validated before it can be used for business intelligence. The main goal of ETL testing is to discover data defects. Here are the cases most commonly tested in ETL.

Data mapping

This is the most important test case in ETL testing. We need to make sure that each source field lands in the correct target field and that the data extracted from the source matches the data in the target database.
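A data mapping check can be sketched as a comparison driven by a column map. The table names, column names and `COLUMN_MAP` below are assumptions made for this example. Both tables also live in one in-memory SQLite database for brevity, whereas in practice the source and target are usually separate systems.

```python
import sqlite3

# Hypothetical mapping: source column -> target column.
COLUMN_MAP = {"emp_no": "employee_id", "full_name": "name"}

def find_mapping_mismatches(conn, source_table, target_table, column_map, key):
    """Return source keys whose mapped values differ from, or are missing in, the target."""
    src_cols = list(column_map)
    tgt_cols = [column_map[c] for c in src_cols]
    src = {row[0]: row[1:] for row in conn.execute(
        f"SELECT {key}, {', '.join(src_cols)} FROM {source_table}")}
    tgt = {row[0]: row[1:] for row in conn.execute(
        f"SELECT {column_map[key]}, {', '.join(tgt_cols)} FROM {target_table}")}
    return sorted(k for k, values in src.items() if tgt.get(k) != values)

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE hr_source (emp_no INTEGER, full_name TEXT)")
conn.execute("CREATE TABLE employee_dim (employee_id INTEGER, name TEXT)")
conn.executemany("INSERT INTO hr_source VALUES (?, ?)", [(1, "Joe"), (2, "Ann"), (3, "Raj")])
conn.executemany("INSERT INTO employee_dim VALUES (?, ?)", [(1, "Joe"), (2, "Anne")])

print(find_mapping_mismatches(conn, "hr_source", "employee_dim", COLUMN_MAP, "emp_no"))
# -> [2, 3]  (2 has a changed name, 3 never reached the target)
```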

Searching for inaccurate or duplicate data

Inaccurate, incomplete or duplicate data should not be found in the target database, so checks for accuracy, completeness and uniqueness belong on the test checklist.
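Duplicate and incomplete rows are usually found with plain SQL. The sketch below assumes a hypothetical `employee_dim` table in SQLite. The helper names are illustrative, and the queries would need adapting to the SQL dialect of the actual target.

```python
import sqlite3

def find_duplicates(conn, table, key_columns):
    """Return (key values..., count) for every key that appears more than once."""
    cols = ", ".join(key_columns)
    query = f"SELECT {cols}, COUNT(*) FROM {table} GROUP BY {cols} HAVING COUNT(*) > 1"
    return conn.execute(query).fetchall()

def count_nulls(conn, table, column):
    """Return how many rows have no value in the given column."""
    return conn.execute(f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL").fetchone()[0]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employee_dim (employee_id INTEGER, name TEXT)")
conn.executemany(
    "INSERT INTO employee_dim VALUES (?, ?)",
    [(1, "Joe"), (1, "Joe"), (2, None)],
)

print(find_duplicates(conn, "employee_dim", ["employee_id", "name"]))  # -> [(1, 'Joe', 2)]
print(count_nulls(conn, "employee_dim", "name"))                       # -> 1
```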

Data Schema validation

The schema of the data coming from the source must be compatible with the schema of the target database, so it needs to be validated. This is very important because a schema mismatch can cause loads to fail or data to be truncated or misinterpreted.
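One way to sketch a schema check is to compare the target table's columns and declared types against an expected definition. The example below uses SQLite's `PRAGMA table_info`. The expected schema and the table name are assumptions for illustration, and other databases expose the same information through their own catalog views.

```python
import sqlite3

# Hypothetical expected schema for the target table: column -> declared type.
EXPECTED = {"employee_id": "INTEGER", "name": "TEXT", "units_sold": "INTEGER"}

def table_schema(conn, table):
    """Return {column name: declared type} as reported by SQLite."""
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
    return {row[1]: row[2].upper() for row in conn.execute(f"PRAGMA table_info({table})")}

def schema_diff(expected, actual):
    """Return {column: (expected type, actual type)} for every column that differs."""
    diffs = {}
    for col in expected.keys() | actual.keys():
        if expected.get(col) != actual.get(col):
            diffs[col] = (expected.get(col), actual.get(col))
    return diffs

conn = sqlite3.connect(":memory:")
# The target was created with units_sold as TEXT by mistake.
conn.execute("CREATE TABLE sales_fact (employee_id INTEGER, name TEXT, units_sold TEXT)")

print(schema_diff(EXPECTED, table_schema(conn, "sales_fact")))
# -> {'units_sold': ('INTEGER', 'TEXT')}
```

A missing or unexpected column shows up with `None` on one side of the pair.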

Data Integrity and Quality checks

Data integrity is crucial. You need to make sure that the structure and conventions of the data are accurate and consistent throughout the schema. Also, the table relations, keys, etc., should be preserved after transformation.
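A common integrity check is looking for orphaned rows, that is, child rows whose foreign key no longer points at a parent after transformation. The sketch below assumes two hypothetical tables, `employee_dim` and `sales_fact`, in SQLite.

```python
import sqlite3

def find_orphans(conn, child, fk, parent, pk):
    """Return foreign key values in the child table that have no matching parent row."""
    query = (
        f"SELECT c.{fk} FROM {child} AS c "
        f"LEFT JOIN {parent} AS p ON c.{fk} = p.{pk} "
        f"WHERE p.{pk} IS NULL ORDER BY c.{fk}"
    )
    return [row[0] for row in conn.execute(query)]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employee_dim (employee_id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE sales_fact (sale_id INTEGER PRIMARY KEY, employee_id INTEGER)")
conn.executemany("INSERT INTO employee_dim VALUES (?, ?)", [(1, "Joe"), (2, "Ann")])
conn.executemany("INSERT INTO sales_fact VALUES (?, ?)", [(10, 1), (11, 3)])

print(find_orphans(conn, "sales_fact", "employee_id", "employee_dim", "employee_id"))
# -> [3]
```

Note that child rows with a NULL foreign key are also reported by this query, which is usually what a tester wants to see.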

Verifying the Business Rules

Data imported into the target database should comply with the applicable business rules. For example, a calculation formula applied during transformation should always give accurate output. This check is important because it acts as a user-level test of the whole system and shows whether it can do the job it was built for.
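As an illustration of a business rule check, suppose the rule is that a stored order total must equal units multiplied by unit price. The rule, the `order_fact` table and its columns are all invented for this sketch. Amounts are kept in integer cents to avoid floating-point rounding in the comparison.

```python
import sqlite3

def find_rule_violations(conn):
    """Return order ids whose stored total breaks the rule total = units * unit price."""
    query = (
        "SELECT order_id FROM order_fact "
        "WHERE total_cents != units * unit_price_cents ORDER BY order_id"
    )
    return [row[0] for row in conn.execute(query)]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE order_fact "
    "(order_id INTEGER, units INTEGER, unit_price_cents INTEGER, total_cents INTEGER)"
)
conn.executemany(
    "INSERT INTO order_fact VALUES (?, ?, ?, ?)",
    [(1, 3, 250, 750), (2, 2, 999, 1998), (3, 5, 100, 600)],
)

print(find_rule_violations(conn))  # -> [3]
```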

Testing the Performance

Performance testing is also important because large data volumes or expensive transformations can slow down the ETL process and the systems that depend on it.
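A very rough way to start is to time a load of a known number of rows and track that figure between runs. The sketch below is only that: the table, the row volume and the helper name are assumptions, and an in-memory SQLite database will not behave like a real warehouse under load.

```python
import sqlite3
import time

def timed_load(conn, rows):
    """Load rows into a hypothetical target table and return the elapsed seconds."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_fact (employee_id INTEGER, units_sold INTEGER)"
    )
    start = time.perf_counter()
    conn.executemany("INSERT INTO sales_fact VALUES (?, ?)", rows)
    conn.commit()
    return time.perf_counter() - start

conn = sqlite3.connect(":memory:")
rows = [(i, i % 100) for i in range(10_000)]
elapsed = timed_load(conn, rows)
print(f"loaded {len(rows)} rows in {elapsed:.3f}s")
```

In a real test, the measured time would be compared against an agreed budget for a representative data volume.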

Testing the Rows and Table counts

The row and table counts in the target database should match those in the source. Any mismatch could point to a bug in the ETL implementation. So, it’s imperative that we test the number of tables, columns and rows of data in the target database against the source data.
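A count comparison can be sketched as follows. Two in-memory SQLite connections stand in for the source and target databases, and the table names are made up for the example.

```python
import sqlite3

def row_counts(conn, tables):
    """Return {table: row count} for the given tables."""
    return {t: conn.execute(f"SELECT COUNT(*) FROM {t}").fetchone()[0] for t in tables}

def count_mismatches(source_conn, target_conn, tables):
    """Return {table: (source count, target count)} where the counts differ."""
    src = row_counts(source_conn, tables)
    tgt = row_counts(target_conn, tables)
    return {t: (src[t], tgt[t]) for t in tables if src[t] != tgt[t]}

source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
for db in (source, target):
    db.execute("CREATE TABLE sales (id INTEGER)")
    db.execute("CREATE TABLE hr (id INTEGER)")
source.executemany("INSERT INTO sales VALUES (?)", [(1,), (2,), (3,)])
target.executemany("INSERT INTO sales VALUES (?)", [(1,), (2,)])
source.execute("INSERT INTO hr VALUES (1)")
target.execute("INSERT INTO hr VALUES (1)")

print(count_mismatches(source, target, ["sales", "hr"]))  # -> {'sales': (3, 2)}
```

If the transformation step deliberately filters rows out, the expected target count has to be adjusted for that before comparing.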

ETL Testing Tools

Here is a selection of ETL testing tools available on the market:

- QuerySurge is a powerful ETL testing tool that enables the test process to be fully automated. It supports various cloud databases and CI/CD processes.

- Informatica Data Validation makes ETL testing quick and easy for those with limited programming skills. It has an intuitive UI which makes the tool a popular choice for ETL testers.

- Datagaps is another powerful ETL testing tool that can perform data extraction and test case execution in parallel.

In Conclusion

As you may have learned, ETL testing is mostly a comparison of how the data in the target database looks, behaves and performs against the data in the source database. Understanding the source data is therefore extremely important when you test the ETL process. If you do not understand the source data and its business purpose, your ETL testing is unlikely to meet its goals. Your SQL skills will also be tested here. ETL testing can be challenging, but it is an extremely important process for any big enterprise application.